I recently ran into a server where somebody had accidentally issued a "chown -R www-data:www-data /var". As a result, all files and directories within /var were chowned to www-data, which effectively means a complete system fuckup, as everything from logging to mail, caching and databases relies on correct ownership there. Sadly, this was a remote production server, so I had to find a quick solution to get it into a state at least good enough for the next few days.

I started poking around for a possibility to reset file permissions based on .deb package details. There are at least some approaches to do this (the method there misses a pre-download of all installed .deb packages), and I remember running a program years ago that checked file permissions based on .deb files, but I could not find it via apt-get. Nonetheless, this approach cannot handle application-created files. Files in /var/log, for instance, don't have to be declared in a .deb file but urgently need the right file permissions.

So I came to a different approach: cloning permissions. By chance we had a quite similar server running, meaning the same Linux distribution and nearly the same services installed. I wrote a one-liner to save the file permissions on the healthy server:

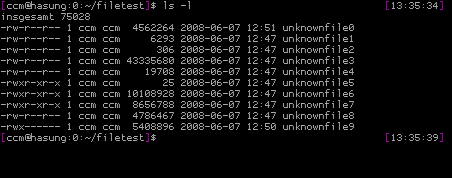

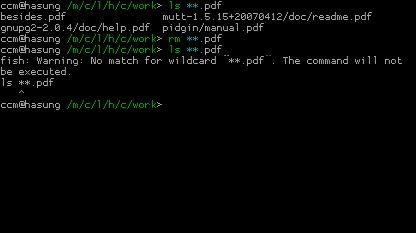

$ find /var -printf "%p;%u;%g;%m\n" > permissions.txt

The command writes a text file with the following format:

dir/filename;user;group;mode

Please note: I started out using ":" as a separator but noticed that at least some Perl-related files have a double colon in their name.
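The same problem would hit any file whose name contains the ";" separator itself. A minimal sketch for checking that up front on the healthy server, assuming GNU find; the function name find_unsafe_names is made up for this illustration:

```shell
#!/bin/bash
# Sketch: print every path below a directory whose file name contains ';'.
# Such a name would add an extra field to its "path;user;group;mode" record.
# find_unsafe_names is a hypothetical helper name, not part of the original post.
find_unsafe_names() {
    local root="$1"
    find "$root" -name '*;*' -print
}
```

Calling `find_unsafe_names /var` should print nothing if ";" is a safe separator for that tree; any path it does print would need a different separator or manual handling.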

Now I only needed a simple shell script that sets the file permissions on the broken server based on the text file we just generated. It came down to this:

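#!/bin/bash
# Restore owner, group and mode for every entry recorded in permissions.txt.
# Reading line by line with IFS=';' keeps file names containing spaces intact.
while IFS=';' read -r FILE OWNER GROUP MODE
do
    # -h changes a symlink itself instead of the file it points to
    chown -h "${OWNER}:${GROUP}" "${FILE}"
    # chmod follows symlinks (which find reports as mode 777), so skip them
    [ -L "${FILE}" ] || chmod "${MODE}" "${FILE}"
done < permissions.txt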

The script reads every line of the text file, splits its content into variables and sets the user and group via "chown" as well as the mode via "chmod". It doesn't check whether a directory/file exists before chowning/chmodding it, as it doesn't actually matter: if it's not there, the commands just fail with an error message and do nothing harmful.
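If you want to know up front which entries will not be found on the broken server, something like the following could list them. This is only a sketch under the assumption that the list uses the "path;user;group;mode" format from above; list_missing is a hypothetical name:

```shell
#!/bin/bash
# Sketch: print every path from a permissions list that does not exist locally.
# list_missing is a hypothetical helper name, not part of the original script.
list_missing() {
    local list="$1"
    # Only the first field (the path) matters here; the rest lands in "_".
    while IFS=';' read -r path _
    do
        # -e is false for dangling symlinks, so test -L as well
        [ -e "$path" ] || [ -L "$path" ] || printf '%s\n' "$path"
    done < "$list"
}
```

Running `list_missing permissions.txt` on the broken server gives a rough idea of where the two machines differ, for example services that are only installed on the healthy one.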

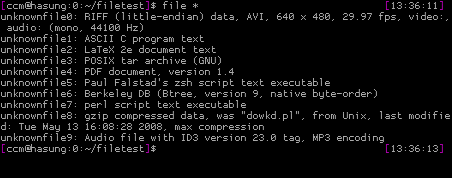

After you've run this, it's a good idea to restart all services and start watching log files. You have to take care of all services that rely on fast-changing files in /var. For instance, a mail daemon puts a lot of unique file names into /var/spool, and the script above won't be able to take care of those. You also have to double-check database directories like /var/lib/mysql, hosted repositories and so on. But the script will provide you with a state where most services are at least running, and you get an idea of how to switch back the remaining directories. It might be helpful to search for suspicious files, like

$ find /var -user www-data
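Some files legitimately belong to www-data, so the raw output of that search can be noisy. The following sketch narrows it down with the help of the list from the healthy server. It assumes GNU find and grep plus the "path;user;group;mode" format; the function names unexpected_owner and permission_drift are invented for this example:

```shell
#!/bin/bash
# Sketch: both helpers are hypothetical and assume the "path;user;group;mode"
# list format produced by the find one-liner on the healthy server.

# Print paths below ROOT owned by OWNER that the list does NOT record
# as being owned by OWNER on the healthy server.
unexpected_owner() {
    local root="$1" owner="$2" list="$3" expected
    expected=$(mktemp)
    awk -F';' -v o="$owner" '$2 == o { print $1 }' "$list" > "$expected"
    # -F fixed strings, -x whole-line match, -v keep only non-matching paths
    find "$root" -user "$owner" -print | grep -vxFf "$expected"
    rm -f "$expected"
}

# Print records of the current state of ROOT that have no identical
# record in the list (different owner, group or mode, or an unknown path).
permission_drift() {
    local root="$1" list="$2" current expected
    current=$(mktemp)
    expected=$(mktemp)
    find "$root" -printf '%p;%u;%g;%m\n' | sort > "$current"
    sort "$list" > "$expected"
    # comm -13 prints the lines that appear only in the second file
    comm -13 "$expected" "$current"
    rm -f "$current" "$expected"
}
```

For example, `unexpected_owner /var www-data permissions.txt` should leave mostly application-created files such as spool or database files, and `permission_drift /var permissions.txt` shows everything that still differs from the healthy server. Both only report; they change nothing.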